Mastering Model Diagnostics in Machine Learning for Reliable AI

Delving Deeper into Model Diagnostics: Ensuring Reliability in Machine Learning

In machine learning (ML), building an algorithm or model is only the beginning of a much more intricate process. The next critical phase is model diagnostics, which checks that a model is reliable and accurate before it is deployed in real-world scenarios. The topic deserves a detailed look, building on our previous discussions of large language models and machine learning.

Understanding the Core of Model Diagnostics

At its core, model diagnostics in machine learning involves evaluating a model to check for accuracy, understand its behavior under various conditions, and identify any potential issues that could lead to inaccurate predictions. This process is crucial, as it directly impacts the effectiveness of models in tasks ranging from anomaly detection to predictive analytics.

One fundamental aspect of diagnostics is the analysis of residuals, the differences between observed and predicted values. For a well-specified model, residuals should look like unstructured noise centered on zero. A visible curve, a trend, or a spread that grows with the predicted value points to issues like underfitting or bias, while residuals that are far larger on held-out data than on training data point to overfitting. Such insights enable us to refine our models so they perform well across diverse datasets and scenarios.
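As a rough illustration of residual analysis, the sketch below fits a straight line to data that is actually quadratic and then inspects the residuals. The data, the `fit_line` and `residuals` helpers, and the choice of ordinary least squares are all assumptions made for this example, not part of any particular workflow.

```python
# Sketch: fit a straight line to curved data, then inspect the residuals.
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def residuals(xs, ys, slope, intercept):
    """Observed minus predicted, one value per sample."""
    return [y - (slope * x + intercept) for x, y in zip(xs, ys)]

# Synthetic data (assumption): the true relationship is quadratic.
xs = [float(x) for x in range(-5, 6)]
ys = [x ** 2 for x in xs]

slope, intercept = fit_line(xs, ys)
res = residuals(xs, ys, slope, intercept)

# Least-squares residuals with an intercept always sum to about zero,
# so the average alone says little. The pattern is what matters here:
# positive at both ends and negative in the middle, a sign of underfitting.
print([round(r, 1) for r in res])
```

The U-shaped residuals show that the linear model misses curvature in the data, which is the kind of pattern a residual plot is meant to expose.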

Advanced Techniques in Diagnostics

As we delve deeper into model diagnostics, we encounter more advanced techniques designed to test models thoroughly:

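- Variance Inflation Factor (VIF): Used to detect multicollinearity in regression models, where independent variables are highly correlated. A feature’s VIF is 1 / (1 - R²), where R² comes from regressing that feature on all the others. Values above roughly 5 to 10 are a common rule of thumb for problematic collinearity and suggest that feature selection needs refinement.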

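- Cross-Validation: This technique divides the dataset into several parts (folds), trains on all but one, and tests on the fold that was held out, rotating until every fold has served as the test set once. Averaging the scores gives a steadier estimate of the model’s performance and generalizability than a single train/test split.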

- Learning Curves: By plotting training and validation scores against training set size, learning curves show how efficiently a model learns. A persistent gap between a strong training score and a weaker validation score suggests overfitting, while two curves that converge at a poor score suggest underfitting.
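The three techniques above can be sketched in plain Python on a small synthetic dataset. Everything here is an assumption made for illustration: the data, the helper names, the use of a one-feature linear model, and the shortcut that with exactly two predictors the R² in the VIF formula equals the squared Pearson correlation between them. Real projects would typically rely on a statistics or ML library rather than hand-rolled helpers like these.

```python
# Sketch only: pure-Python versions of the three checks on synthetic data.
import random
from statistics import mean

def pearson_r(a, b):
    """Pearson correlation between two equal-length sequences."""
    a_bar, b_bar = mean(a), mean(b)
    cov = sum((x - a_bar) * (y - b_bar) for x, y in zip(a, b))
    var_a = sum((x - a_bar) ** 2 for x in a)
    var_b = sum((y - b_bar) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5

def vif_two_features(a, b):
    """VIF = 1 / (1 - R^2); with two predictors, R^2 is r^2.
    Perfectly collinear inputs (r^2 == 1) would divide by zero."""
    return 1.0 / (1.0 - pearson_r(a, b) ** 2)

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar

def mse(xs, ys, slope, intercept):
    """Mean squared error of the fitted line on the given points."""
    return mean((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))

def k_fold_mse(xs, ys, k):
    """Hold out each fold once; fit on the remaining folds."""
    scores = []
    for fold in range(k):
        train = [i for i in range(len(xs)) if i % k != fold]
        test = [i for i in range(len(xs)) if i % k == fold]
        slope, intercept = fit_line([xs[i] for i in train],
                                    [ys[i] for i in train])
        scores.append(mse([xs[i] for i in test], [ys[i] for i in test],
                          slope, intercept))
    return scores

def learning_curve(xs, ys, sizes, seed=0):
    """Return (n, train_mse, val_mse) for each training size n."""
    order = list(range(len(xs)))
    random.Random(seed).shuffle(order)
    n_val = len(xs) // 4  # fixed validation set: a quarter of the data
    val, pool = order[:n_val], order[n_val:]
    x_val, y_val = [xs[i] for i in val], [ys[i] for i in val]
    curve = []
    for n in sizes:
        x_tr = [xs[i] for i in pool[:n]]
        y_tr = [ys[i] for i in pool[:n]]
        slope, intercept = fit_line(x_tr, y_tr)
        curve.append((n, mse(x_tr, y_tr, slope, intercept),
                      mse(x_val, y_val, slope, intercept)))
    return curve

# Synthetic data (assumption): linear target, small deterministic noise.
noise = [((i * 7) % 5 - 2) * 0.5 for i in range(20)]
x1 = [float(i) for i in range(20)]
x2 = [2 * x + e for x, e in zip(x1, noise)]    # nearly a rescaled copy of x1
x3 = [float((i * 7) % 5) for i in range(20)]   # mostly unrelated to x1
y = [2 * x + 1 + e for x, e in zip(x1, noise[::-1])]

vif_collinear = vif_two_features(x1, x2)
vif_unrelated = vif_two_features(x1, x3)
cv_scores = k_fold_mse(x1, y, k=4)
curve = learning_curve(x1, y, sizes=[3, 6, 9, 12, 15])

print("VIF x1 vs x2:", round(vif_collinear, 1))
print("VIF x1 vs x3:", round(vif_unrelated, 2))
print("4-fold MSE:", [round(s, 3) for s in cv_scores])
for n, train_err, val_err in curve:
    print(n, round(train_err, 3), round(val_err, 3))
```

The nearly duplicated feature produces a VIF far above the usual thresholds, while the unrelated one stays close to 1. The printed learning-curve rows can be plotted with training size on the x-axis to compare training and validation error.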

Challenges and Future Directions

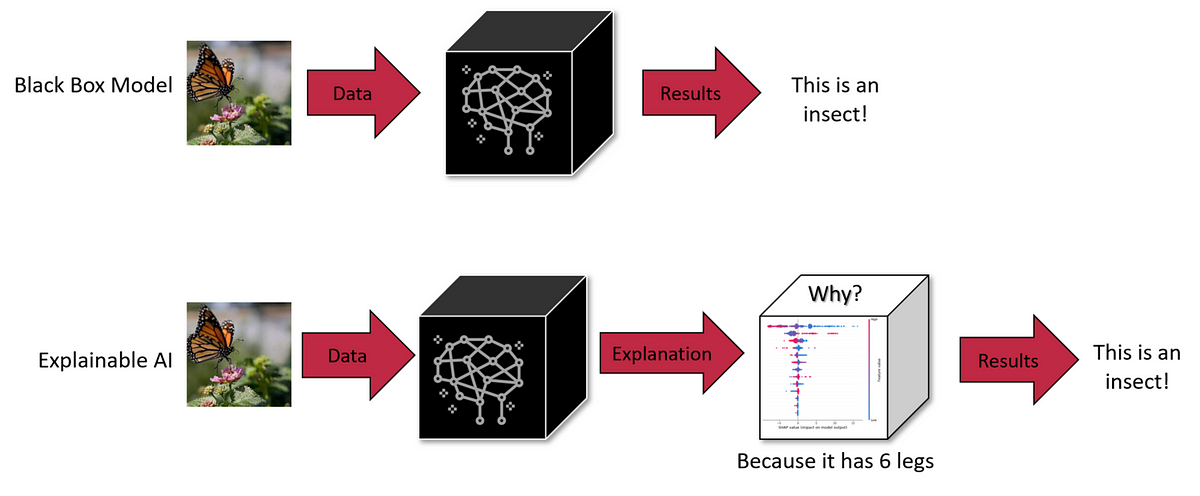

The landscape of model diagnostics is continually evolving, with new challenges emerging as models become more complex. Large language models and deep learning architectures, with their vast number of parameters, introduce unique diagnostic challenges. The black-box nature of such models often makes interpretability and transparency hard to achieve. This has led to a growing focus on techniques like explainable AI (XAI), which aim to make the behaviors of complex models more understandable and their decisions more transparent.

In my journey from developing machine learning algorithms for self-driving robots to consulting on AI and cloud solutions, the importance of robust model diagnostics has been a constant. Whether through my work at DBGM Consulting, Inc., or the algorithms I developed during my time at Harvard University, the lesson is clear: diagnostics are not just a step in the process; they are an ongoing commitment to excellence and reliability in machine learning.

Conclusion

The field of machine learning is as exciting as it is challenging. As we push the boundaries of what’s possible with AI and ML, the role of thorough model diagnostics becomes increasingly critical. It ensures that our models not only perform well on paper but also function effectively and ethically in the real world. The journey towards mastering model diagnostics is complex but deeply rewarding, offering a path to creating AI that is not only powerful but also responsible and reliable.

As we continue to advance in the realms of AI and ML, let’s remain vigilant about the diagnostic processes that keep our models in check, ensuring that they serve humanity’s best interests.