Unveiling the Complex Realms of Machine Learning: Paradigms and Innovations

Deepening Our Understanding of Machine Learning Paradigms: A Journey Beyond the Surface

In the realm of artificial intelligence (AI) and machine learning (ML), the conversation often gravitates towards the surface-level comprehension of technologies and their applications. However, to truly leverage the power of AI and ML, one must delve deeper into the paradigms that govern these technologies. Reflecting on my journey, from mastering machine learning algorithms for self-driving robots at Harvard University to implementing cloud solutions with AWS during my tenure at Microsoft, I’ve come to appreciate the significance of understanding these paradigms not just as abstract concepts, but as the very foundation of future innovations.

Exploring Machine Learning Paradigms

Machine learning paradigms can be broadly classified into supervised learning, unsupervised learning, semi-supervised learning, and reinforcement learning. Each paradigm offers a unique approach to “teaching” machines how to learn, making them suited for different types of problems.

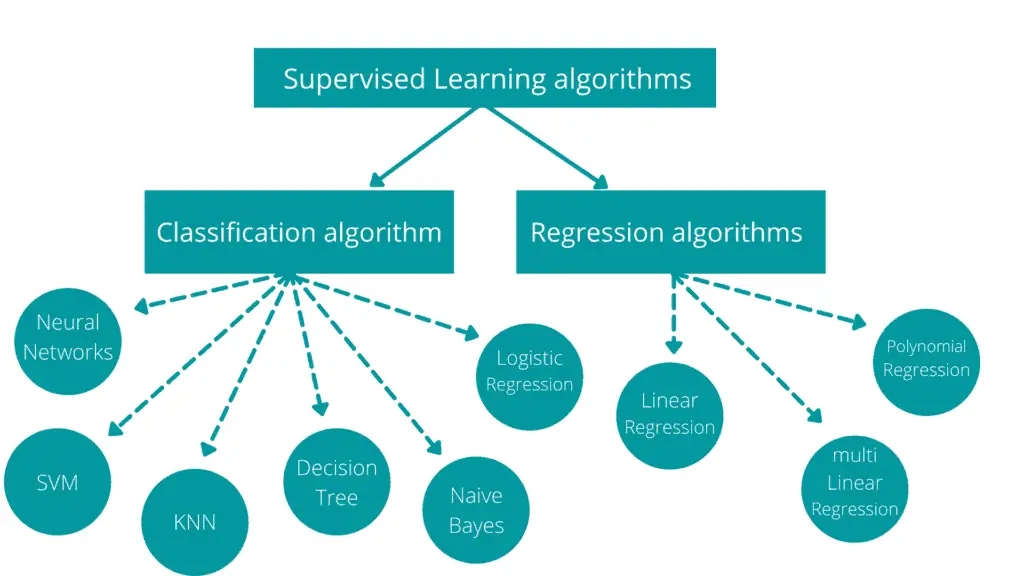

Supervised Learning

Supervised learning involves teaching the model using labeled data. This approach is akin to learning with a guide: the correct answers are provided, and the model learns to predict outputs from inputs. Techniques range from simple regression models to complex neural networks used for tasks such as image recognition.
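To make the idea of learning from labeled examples concrete, here is a minimal sketch of the simplest case: fitting a straight line by ordinary least squares. The function name `fit_line` and the toy data are assumptions made for illustration, not part of any particular library.

```python
def fit_line(xs, ys):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Covariance of x and y, and variance of x (both unnormalized).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Labeled training data: each input x comes with its correct answer y.
# The toy labels were generated from y = 2x + 1.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 5.0, 7.0, 9.0]

slope, intercept = fit_line(xs, ys)

# Use the learned parameters to predict the output for an unseen input.
prediction = slope * 5.0 + intercept
print(slope, intercept, prediction)
```

The labels are what make this supervised: the fit is driven entirely by the gap between the line and the known answers.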

Unsupervised Learning

In unsupervised learning, the model learns patterns and structures from unlabeled data. Without any external guidance, it uncovers hidden structure such as clusters or associations, which makes it useful for tasks like anomaly detection and market basket analysis.
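As a rough illustration of finding structure without labels, the sketch below runs k-means clustering on one-dimensional points. K-means is just one common clustering method, and the function name `kmeans_1d`, the data, and the starting centroids are all assumptions chosen for the example.

```python
def kmeans_1d(points, centroids, iterations=10):
    """Cluster 1-D points by alternating assignment and centroid updates."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        centroids = [
            sum(c) / len(c) if c else centroids[i]
            for i, c in enumerate(clusters)
        ]
    return centroids, clusters


# Unlabeled data: no "correct answers" are given, only the raw values.
points = [1.0, 1.2, 0.8, 9.0, 9.5, 10.1]

centroids, clusters = kmeans_1d(points, centroids=[0.0, 5.0])
print(centroids)
print(clusters)
```

Nothing in the input says which group a point belongs to; the two groups emerge only from the distances between the points.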

Semi-Supervised Learning

Semi-supervised learning is a hybrid approach that uses both labeled and unlabeled data. This paradigm is particularly useful when acquiring a fully labeled dataset is expensive or time-consuming. It combines the strengths of both supervised and unsupervised learning to improve learning accuracy.
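One simple way to combine the two kinds of data is self-training: fit a model on the few labeled points, let it label the unlabeled points it is confident about, and refit. The sketch below does this with a nearest-centroid classifier on one-dimensional data. Self-training is only one of several semi-supervised techniques, and the names `centroids_of` and `self_train`, the `margin` threshold, and the data are assumptions made for illustration. It also assumes at least two classes are present in the labeled set.

```python
def centroids_of(labeled):
    """Return the mean value of each class in a list of (x, label) pairs."""
    sums, counts = {}, {}
    for x, label in labeled:
        sums[label] = sums.get(label, 0.0) + x
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def self_train(labeled, unlabeled, margin=1.0):
    """Grow the labeled set with confident pseudo-labels until none remain."""
    labeled = list(labeled)
    remaining = list(unlabeled)
    while remaining:
        cents = centroids_of(labeled)
        confident, uncertain = [], []
        for x in remaining:
            # Rank classes by distance from x to each class centroid.
            ranked = sorted(cents, key=lambda lab: abs(x - cents[lab]))
            best, runner_up = ranked[0], ranked[1]
            # Confidence: how much closer the best class is than the next one.
            gap = abs(x - cents[runner_up]) - abs(x - cents[best])
            (confident if gap >= margin else uncertain).append((x, best))
        if not confident:
            break  # Nothing left that the model is sure about.
        labeled.extend(confident)
        remaining = [x for x, _ in uncertain]
    return centroids_of(labeled), labeled


# Two expensive hand-labeled points and five cheap unlabeled ones.
labeled = [(1.0, "low"), (9.0, "high")]
unlabeled = [1.5, 2.0, 8.0, 8.5, 5.2]

final_centroids, final_labeled = self_train(labeled, unlabeled)
print(final_centroids)
print(final_labeled)
```

The point 5.2 sits almost midway between the two classes, so it never clears the confidence margin and is left unlabeled rather than guessed at. Choosing that threshold is the main design decision in self-training, because a wrong pseudo-label gets reinforced on every later round.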

Reinforcement Learning

Reinforcement learning is based on the concept of agents learning to make decisions by interacting with their environment. Through trial and error, the agent learns from the consequences of its actions, guided by a reward system. This paradigm is crucial in robotics, game playing, and navigational tasks.
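The trial-and-error loop can be sketched with tabular Q-learning, one widely used reinforcement learning algorithm. The environment here is an assumption invented for the example: a five-cell corridor where the agent starts in the leftmost cell and receives a reward of 1 only on reaching the rightmost cell. The hyperparameter values are likewise illustrative choices.

```python
import random

N_STATES = 5            # Cells 0..4 in a corridor (hypothetical environment).
GOAL = N_STATES - 1     # Reaching the rightmost cell ends the episode.
ACTIONS = (-1, +1)      # Action 0 moves left, action 1 moves right.


def step(state, action_index):
    """Apply an action; return (next_state, reward, done)."""
    next_state = min(max(state + ACTIONS[action_index], 0), GOAL)
    done = next_state == GOAL
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def choose_action(q_row, epsilon, rng):
    """Epsilon-greedy selection with random tie-breaking."""
    if rng.random() < epsilon:
        return rng.randrange(len(q_row))
    best = max(q_row)
    return rng.choice([a for a, value in enumerate(q_row) if value == best])


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a Q-table by interacting with the corridor."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose_action(q[state], epsilon, rng)
            next_state, reward, done = step(state, action)
            # Q-learning target: reward plus discounted best future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


q_table = train()
# Greedy policy: the highest-valued action in each non-terminal cell.
greedy_policy = [max(range(2), key=lambda a: q_table[s][a]) for s in range(GOAL)]
print(greedy_policy)
```

No one tells the agent that moving right is correct. It has to discover this from the reward alone, which is why early episodes wander and later ones head straight for the goal.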

The Future Direction of Machine Learning Paradigms

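As we march towards a future dominated by AI and ML, understanding and innovating within these paradigms will be critical. Large language models (LLMs), a focal point of our previous discussions, show how the paradigms combine in practice: they are typically pretrained on unlabeled text with a self-supervised objective (predicting the next token), then refined with supervised fine-tuning and, in many cases, reinforcement learning from human feedback. Together, these stages are pushing the boundaries of what’s possible in natural language processing and generation.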

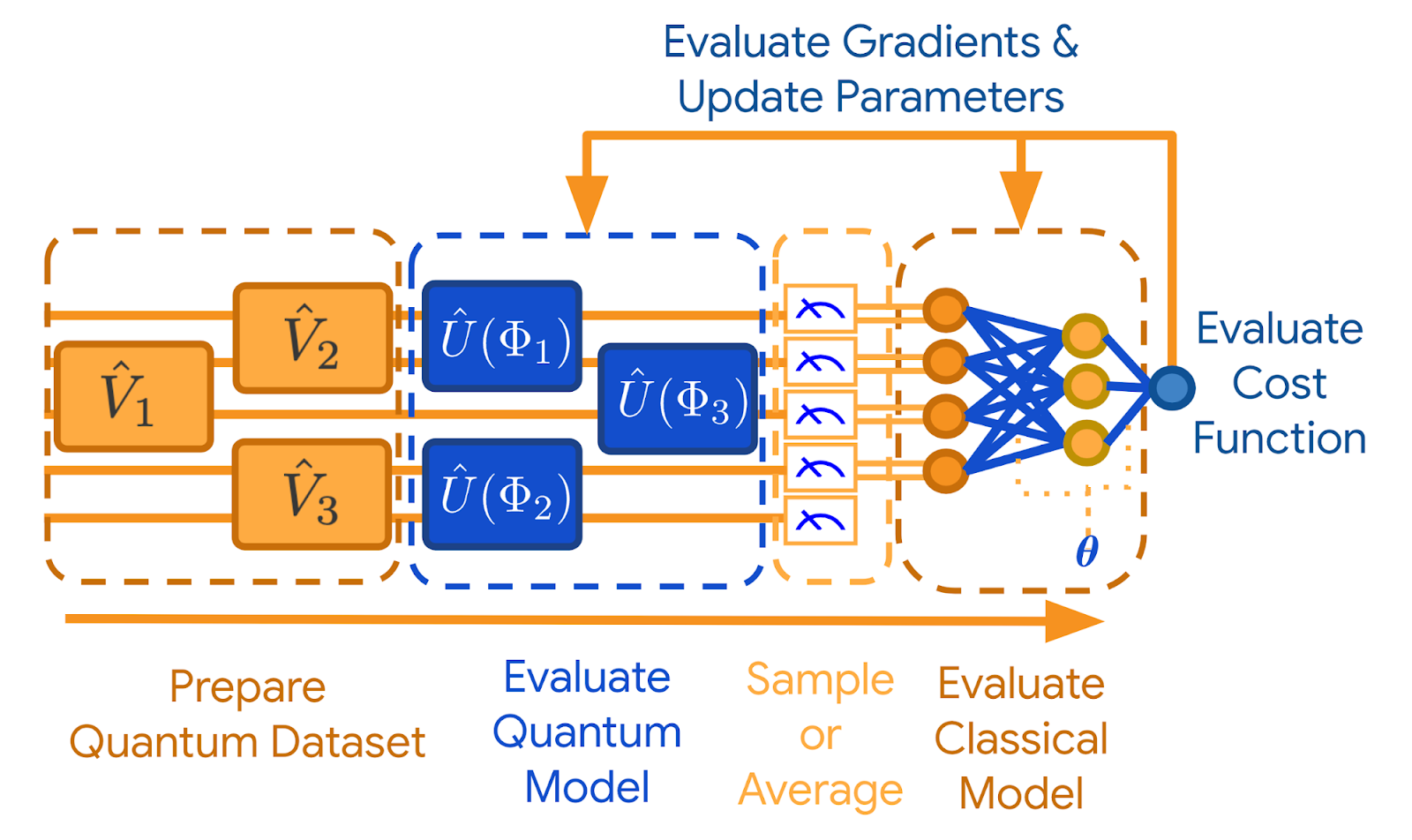

The integration of machine learning with quantum computing presents another exciting frontier. Quantum-enhanced machine learning could, in principle, speed up certain training and optimization tasks, with potential applications in fields like drug discovery and materials science, although practical advantages on real-world workloads have yet to be demonstrated.

Challenges and Ethical Considerations

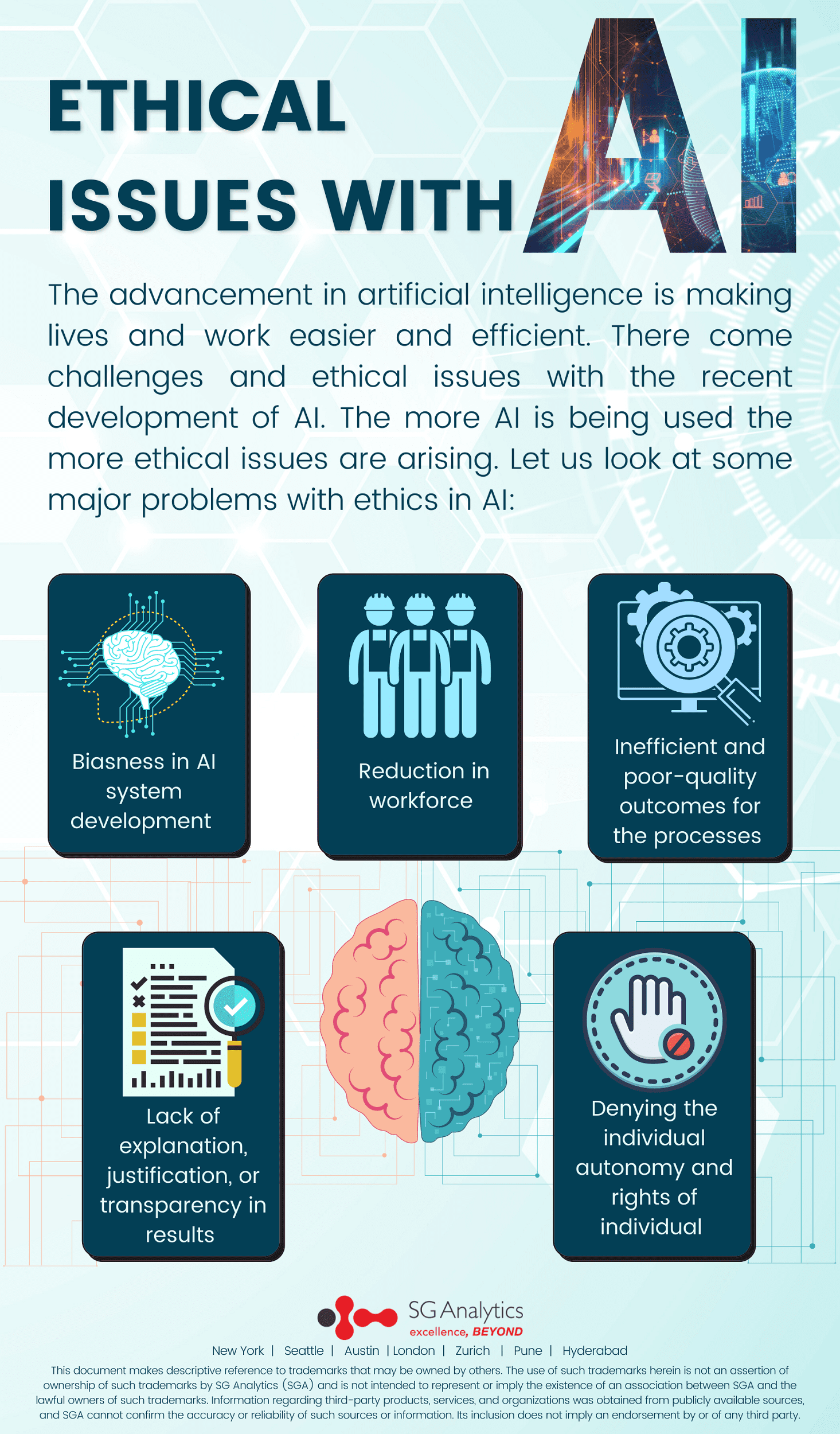

Despite the promising advancements within ML paradigms, challenges such as data privacy, security, and ethical implications remain. The transparency and fairness of algorithms, especially in sensitive applications like facial recognition and predictive policing, require our keen attention and a careful approach to model development and deployment.

Conclusion

The journey through the ever-evolving landscape of machine learning paradigms is both fascinating and complex. Drawing from my experiences and projects, it’s clear that a deeper understanding of these paradigms not only enhances our capability to innovate but also equips us to address the accompanying challenges more effectively. As we continue to explore the depths of AI and ML, let us remain committed to leveraging these paradigms for the betterment of society.

For those interested in diving deeper into the intricacies of AI and ML, including hands-on examples and further discussions on large language models, I invite you to explore my previous articles and share your insights.

To further explore machine learning models and their practical applications, visit DBGM Consulting, Inc., where we bridge the gap between theoretical paradigms and real-world implementations.
