Ethical and Security Challenges in Deep Learning’s Evolution

In the rapidly advancing landscape of Artificial Intelligence (AI), deep learning stands as a cornerstone technology driving innovation across industries. However, recent revelations about significant safety and ethical concerns within top AI research organizations have sparked a global debate on the trajectory of deep learning and its implications for society. Drawing on my experience in AI, machine learning, and security, I examine these challenges in this article and emphasize the need for robust safeguards and ethical frameworks in the development of deep learning technologies.

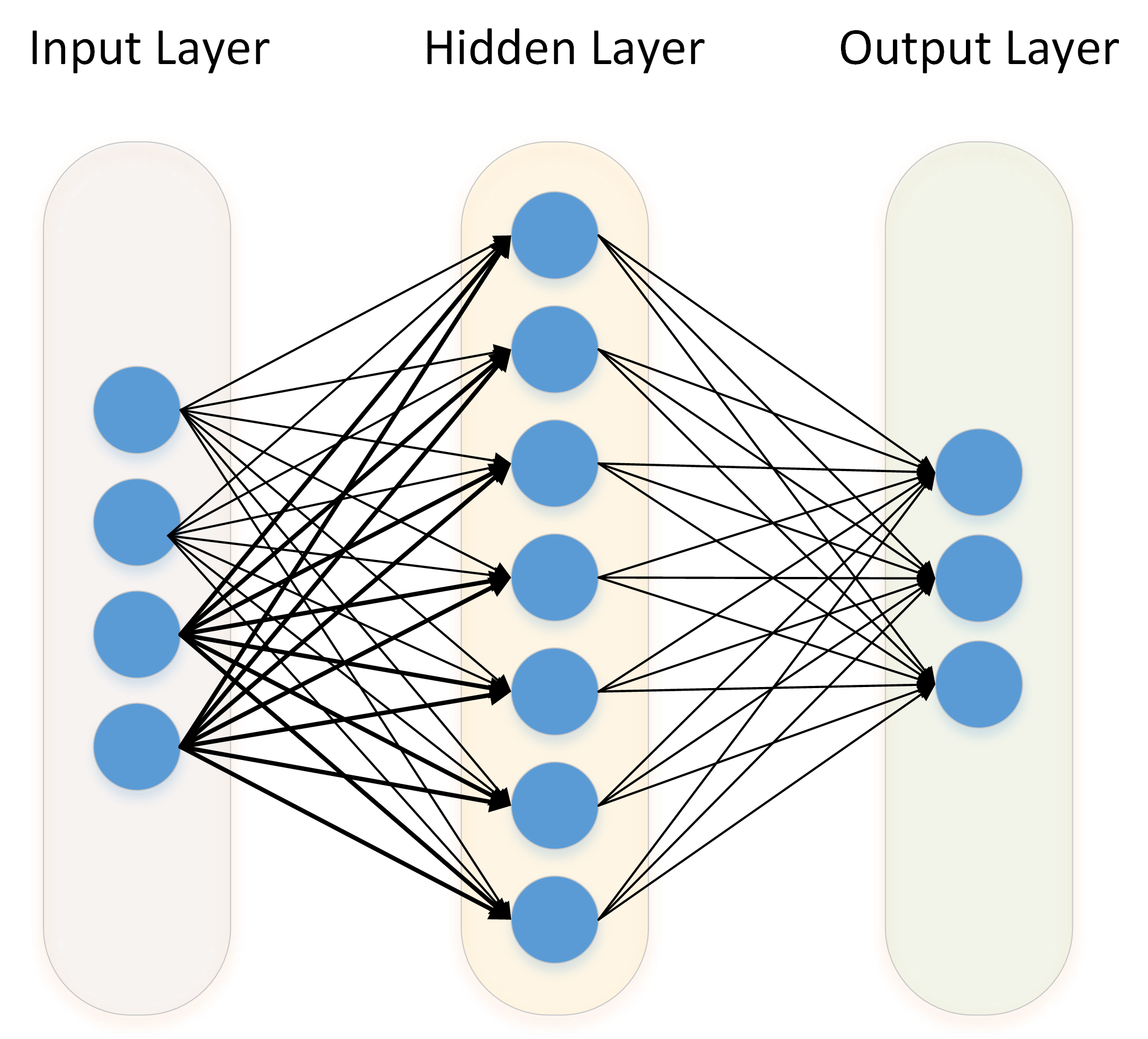

The Double-Edged Sword of Deep Learning

Deep learning, a subset of machine learning loosely inspired by the neural networks of the human brain, has shown remarkable aptitude for recognizing patterns, making predictions, and supporting decision-making. From enhancing medical diagnostics to powering self-driving cars, its potential is vast. Yet a recent report highlighting the concerns of top AI researchers at organizations like OpenAI, Google, and Meta over the lack of adequate safety measures is a stark reminder that deep learning is a double-edged sword.

The crux of the issue lies in the rapid pace of advancement and the apparent prioritization of innovation over safety. As someone deeply immersed in the AI field, I have always advocated balancing progress with precaution. The concerns cited in the report resonate with my view that while pushing the boundaries of AI is crucial, it should not come at the expense of security and ethical integrity.

Addressing Cybersecurity Risks

The report’s mention of inadequate security measures to resist IP theft by sophisticated attackers underlines a critical vulnerability in the current AI development ecosystem. My experience in cloud solutions and security underscores the importance of robust cybersecurity protocols. In the context of AI, protecting intellectual property and sensitive data is not just about safeguarding business assets; it’s about preventing potentially harmful AI technologies from falling into the wrong hands.

Ethical Implications and the Responsibility of AI Creators

The potential for advanced deep learning models to be fine-tuned or manipulated to pass ethical evaluations poses a significant challenge. This echoes the broader issue of ethical responsibility in AI creation. As someone who has worked on machine learning algorithms for self-driving robots, I am acutely aware of the ethical considerations that must accompany the development of such technologies. The manipulation of AI to pass evaluations not only undermines the integrity of the development process but also poses broader societal risks.
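One way to make this risk concrete is to compare a model's pass rate on a published evaluation set with its pass rate on a private, held-out set that probes the same behavior. The sketch below is illustrative only. The `EvalResult` structure, the function names, the 0.15 threshold, and the toy results are all hypothetical and do not come from the report. A large gap is a signal worth investigating, not proof of manipulation.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class EvalResult:
    """Outcome of a single evaluation prompt (hypothetical structure)."""
    prompt_id: str
    passed: bool


def pass_rate(results: Sequence[EvalResult]) -> float:
    """Return the fraction of prompts that passed."""
    if not results:
        raise ValueError("results must not be empty")
    return sum(r.passed for r in results) / len(results)


def evaluation_gap(public: Sequence[EvalResult],
                   held_out: Sequence[EvalResult]) -> float:
    """Public pass rate minus held-out pass rate."""
    return pass_rate(public) - pass_rate(held_out)


def flag_possible_gaming(public: Sequence[EvalResult],
                         held_out: Sequence[EvalResult],
                         threshold: float = 0.15) -> bool:
    """Flag a model whose public score exceeds its held-out score by
    more than `threshold` (an arbitrary value chosen for illustration)."""
    return evaluation_gap(public, held_out) > threshold


# Toy data: 19 of 20 public prompts pass, but only 12 of 20 held-out prompts.
public_results = [EvalResult(f"pub-{i}", i < 19) for i in range(20)]
held_out_results = [EvalResult(f"held-{i}", i < 12) for i in range(20)]

if flag_possible_gaming(public_results, held_out_results):
    print("Large public/held-out gap: review this model's evaluation.")
```

The design choice here is to keep the held-out prompts out of reach of anyone who tunes the model, so that a model optimized only to pass the published checks shows a visible drop on the private ones.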

Drawing Lessons from Recent Critiques

In light of the concerns raised by AI researchers, there is a pressing need for the AI community to foster a culture of transparency and responsibility. This means implementing advanced safety protocols, conducting regular ethical reviews, and prioritizing the development of AI that is secure, ethical, and beneficial for society. The topics covered in my previous articles (supervised learning, Bayesian probability, and the mathematical foundations of large language models) reinforce the idea that a solid ethical and mathematical foundation is essential for the responsible advancement of deep learning technologies.

Addressing these challenges is not merely an academic exercise but a practical necessity if AI is to evolve safely and ethically. As we stand on the brink of potentially realizing artificial general intelligence, the considerations and protocols we establish today will shape the future of humanity’s interaction with AI.

In conclusion, the report from the U.S. State Department is a critical reminder that the AI community needs to reflect on its priorities and recalibrate them towards safety and ethics. As a professional deeply involved in AI’s practical and theoretical aspects, I advocate for a balanced approach to AI development, where innovation goes hand in hand with robust security measures and ethical integrity. Only by addressing these pressing challenges can we harness the full potential of deep learning to benefit society while mitigating the risks it poses.

I penned this article to initiate a dialogue on the often-overlooked ethical and security facets that accompany the rapid advancement of deep learning technologies. Drawing from personal experiences and recent reports, my objective is to underscore the pragmatic necessity of instituting robust ethical and safety protocols in AI development. I believe that awareness and responsible action by the AI community are pivotal in navigating these challenges, ensuring that the benefits of deep learning are realized without compromising on security and ethical integrity.

While the insights provided are undoubtedly thought-provoking, I remain a bit skeptical. I agree with the importance of ethical and security considerations in AI, particularly in deep learning. However, what tangible steps do you propose we take to confront these issues? In my experience, theoretical discussions are often miles apart from actionable solutions, and the pace at which AI is advancing seems to dwarf the speed of regulatory or ethical framework development. How can we, as practitioners and stakeholders in the field, practically ensure that these ethical and security challenges are more than just talking points?